I Made an API

I’ve blogged multiple times in the past about using Power Automate to automate posting blog posts and other things to social media. While it was good for what it was, and Power Automate does a lot of things really well, it just wasn’t fully what I wanted, especially around the process of creating the content of the blog post. For Mastodon and Bluesky especially, figuring out how to format the post and correctly add things like images, clickable links, and hashtags just isn’t something that works well in the Power Automate process flow. I decided to rework it all as a .NET API. It seemed like another great project for an AI tool like GitHub Spec Kit.

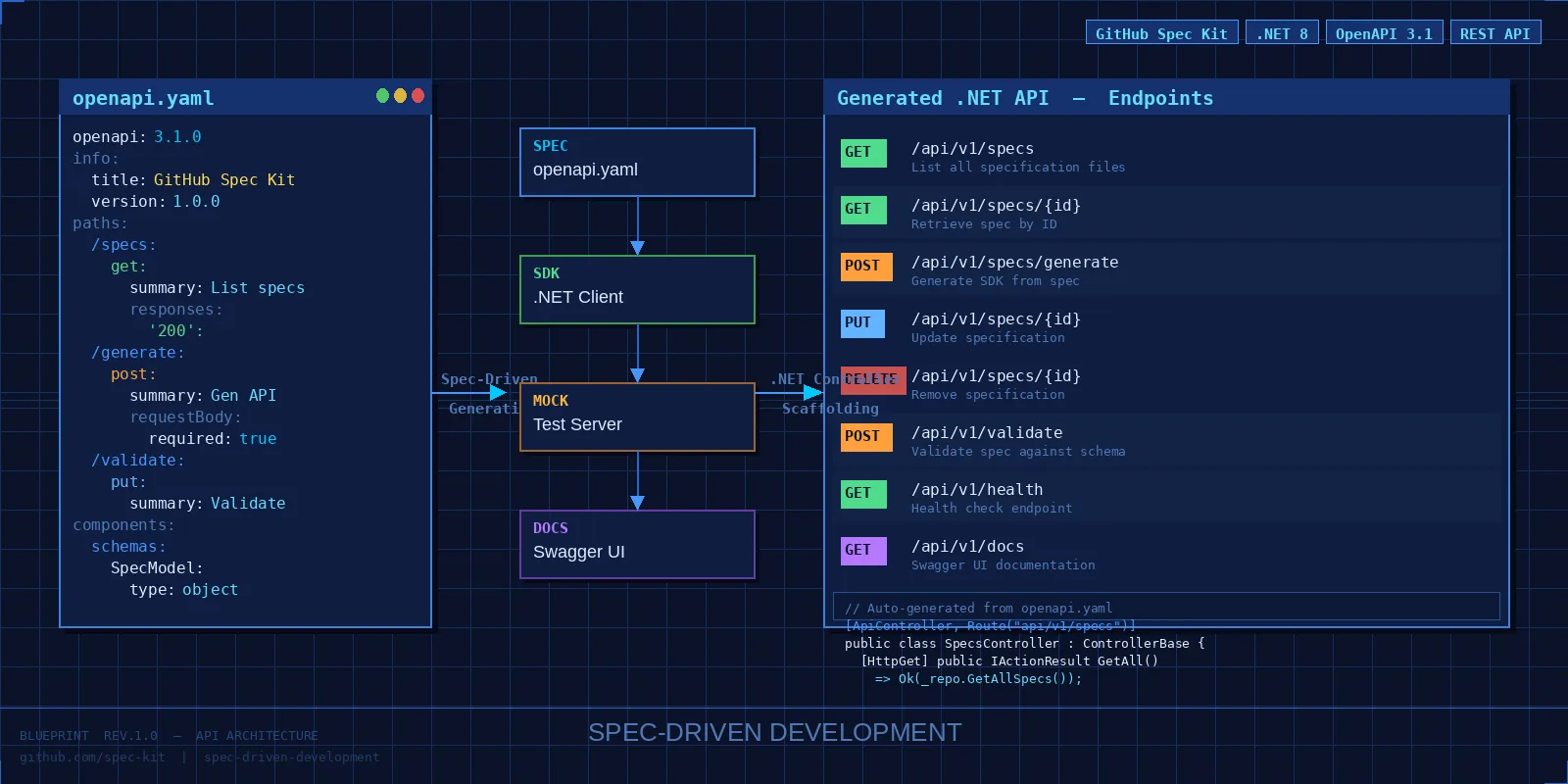

GitHub Spec Kit

For those of you unfamiliar with GitHub Spec Kit, it’s a set of scripts that guide your favorite AI coding assistant through specification-driven development (SDD): laying out the requirements of a project in great detail before a single line of code gets written. If you’ve done anything at all with AI coding tools, you know that the more detail and context you can provide, the better the output from the AI agents. SDD helps you provide all of that context and detail so that your final code is much improved over “vibe coding”. You can read the linked blog post I wrote for my employer to get a better background for SDD and its concepts.

The basic pattern for Spec Kit once your project is set up is as follows:

- specification - The “user story”. You provide as much detail on the feature requirements as possible. For the first specification you describe the MVP.

- plan - The technical details. In this step you provide the technical specifications that you want the code to make use of, including things like the specific language (C#, JavaScript, etc), the project structure, any libraries or APIs to use, and so forth.

- tasks - Tell the AI to take the specification and technical plan and turn that into a list of tasks. It will order, prioritize, and group those tasks appropriately.

- implement - The actual code gets written, whether by AI agents or developers or both.

- PR and deploy - Review the code, sign off, and deploy it.

For each feature specification, you iterate through those steps with the help of Spec Kit. In the standard Spec Kit project, you’ll find a subfolder in the specs folder for each specification round that includes all the related documents. That’s one of the benefits of Spec Kit. All the documentation lives in the same repo as the code. And all of the documents are living, breathing, and updatable. It’s not like most projects where a document gets created, stuffed off into SharePoint somewhere, and then forgotten about forever.

So let’s get into what Spec Kit helped me create. For reference, I mostly use Claude Opus 4.6 as my AI agent at the moment, and most of this API was created with that one. Spec Kit lets you use just about any AI agent you want to.

Project Structure

src/

├── BarretApi.Api # FastEndpoints API host

├── BarretApi.AppHost # Aspire orchestration & configuration

├── BarretApi.Core # Domain models, interfaces, services

├── BarretApi.Infrastructure # Platform clients (Bluesky, Mastodon, LinkedIn)

└── BarretApi.ServiceDefaults # Shared Aspire service defaults

tests/

├── BarretApi.Api.UnitTests

├── BarretApi.Core.UnitTest

└── BarretApi.Integration.Tests

You can see the project structure that it came up with. It’s an Aspire-based project with a .NET web API project and a core and infrastructure pair of class libraries for the common code. Pretty standard stuff.

This API is just for my use (though I’ve made it public so others can see and fork it if they want), so security will just be through a shared secret key passed in the header, which I’m not gonna share with you, of course. It’s my API, not everyone else’s.
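As a rough illustration of the shared-secret idea (sketched in Python for brevity, not the actual .NET code from the repo), the check boils down to comparing a header value against the configured key. The header name `X-Api-Key` and the function name are assumptions; the post doesn’t say which header the API uses.

```python
import hmac

# Hypothetical header name; the post does not say which header the API uses.
API_KEY_HEADER = "X-Api-Key"


def is_authorized(headers: dict, expected_key: str) -> bool:
    """Return True only if the request carries the expected shared secret."""
    # Never authorize when no key has been configured at all.
    if not expected_key:
        return False
    # HTTP header names are case-insensitive, so normalize before the lookup.
    normalized = {name.lower(): value for name, value in headers.items()}
    supplied = normalized.get(API_KEY_HEADER.lower(), "")
    # compare_digest takes time independent of where the strings differ,
    # so a caller cannot learn the key one character at a time.
    return hmac.compare_digest(supplied.encode(), expected_key.encode())
```

The constant-time comparison is a small thing, but it costs nothing and avoids a timing side channel that a plain `==` would leave open.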

I’m also making use of Azure storage tables to hold a few things, such as a tracking table to keep track of which blog posts I’ve flung off to the socials and a refresh token for LinkedIn, because LinkedIn makes using their API a pain in the butt.

It runs in Aspire locally, and gets deployed to an Azure Linux App Service instance for my Power Automate flows to call. I still use PA to schedule and run the API calls. I might at some point add a .NET scheduler to the API and remove the PA need entirely, but it’s fine for now.

So far I’ve added about a half dozen endpoints to replace various Power Automate flows, each to carry out a certain task for me.

/api/social-posts

This one creates a social media post and has the capability to post to Bluesky, LinkedIn, and Mastodon. I only use those three for my tech-related posting, so that’s all I need. I won’t add Twitter. Ever. It lets you pass in images as objects or as URL references, a list of hashtags, and the text of the post. It also requires that every image have an alt-text, because every image MUST HAVE ALT TEXT.
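To give a feel for why clickable hashtags are fiddly, here is a minimal sketch (in Python rather than the API’s C#) of composing a post for Bluesky. Bluesky marks up links and tags with “facets” whose positions are UTF-8 byte offsets, not character offsets, so any non-ASCII character shifts everything after it. The `Image` class and `build_post` function are hypothetical names for illustration, not the repo’s actual types.

```python
from dataclasses import dataclass


@dataclass
class Image:
    url: str
    alt_text: str


def build_post(text: str, hashtags: list, images: list) -> dict:
    """Compose post text plus Bluesky-style hashtag facets."""
    # Reject any image without alt text before doing anything else.
    for image in images:
        if not image.alt_text.strip():
            raise ValueError(f"Image {image.url} is missing alt text")

    full_text = text
    facets = []
    for tag in hashtags:
        tag = tag.lstrip("#")
        full_text += " "
        # Facet indexes are UTF-8 byte offsets, not character offsets.
        start = len(full_text.encode("utf-8"))
        full_text += f"#{tag}"
        end = len(full_text.encode("utf-8"))
        facets.append({
            "index": {"byteStart": start, "byteEnd": end},
            "features": [{"$type": "app.bsky.richtext.facet#tag", "tag": tag}],
        })
    return {"text": full_text, "facets": facets}
```

Calling `build_post("Café time", ["dotnet"], [Image("a.png", "A cup of coffee")])` yields the text `Café time #dotnet` with a facet starting at byte 11, one more than the character position, because `é` takes two bytes.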

My future plan involves adding the ability to schedule posts, but I haven’t added that yet.

/api/social-posts/upload

If I need to attach the image as multipart/form-data, I use this one. Otherwise it’s the same as /api/social-posts.

/api/social-posts/rss-promotion

This one is tied to my blog. It checks my blog RSS feed and automatically posts to social media when there is a new blog post detected. I have some customizations in my blog’s RSS feed, so this one won’t work with a standard RSS feed correctly at the moment. I did it this way because my Hugo blog uses both categories (which get added to the default RSS feed) and tags, which I had to add as a custom element to my RSS feed. It also tracks what posts got posted and when, so that a few hours later it can post a “did you miss it” kind of post.
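Here is a small sketch of reading both categories and custom tags out of a feed item, in Python for brevity. The `<tag>` element name and the sample link are assumptions for illustration; the post doesn’t say what the custom element in the real feed is called.

```python
import xml.etree.ElementTree as ET

# Hypothetical feed: <category> is standard RSS 2.0, <tag> is a made-up
# stand-in for whatever custom element the real feed uses.
SAMPLE_FEED = """<rss version="2.0"><channel>
<item>
  <title>I Made an API</title>
  <link>https://example.com/posts/i-made-an-api/</link>
  <category>Development</category>
  <tag>dotnet</tag>
  <tag>aspire</tag>
</item>
</channel></rss>"""


def parse_items(feed_xml: str) -> list:
    """Extract title, link, categories, and custom tags from each item."""
    root = ET.fromstring(feed_xml)
    items = []
    for item in root.iter("item"):
        items.append({
            "title": item.findtext("title", default=""),
            "link": item.findtext("link", default=""),
            "categories": [c.text for c in item.findall("category") if c.text],
            "tags": [t.text for t in item.findall("tag") if t.text],
        })
    return items
```

A standard feed simply has no `<tag>` elements, so `tags` comes back empty for it, which is the gap a generic version would have to work around.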

My future plan for this one is to create a version that will work from a standard RSS 2.0 feed so I can use this concept elsewhere. I also need to customize it a little bit with some headers. Right now the “did you miss it” post just repeats the original post. I want to add a little extra message to it.

/api/social-posts/rss-random

Another one tied to my blog. It gets the whole RSS feed and then picks a post at random to promote as a “From the archives” type post. I can filter by tags and timeframe. One thing I still need to add to it is a kind of tracking table so that it doesn’t repost the same blog post too frequently. Maybe some kind of time frame parameter like “don’t post anything that has been posted in the last 4 months” or something. Not sure yet. It’s on the to-do list.
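One way the cooldown idea could work, sketched in Python: keep a record of when each post was last promoted, and filter those out before picking at random. The function name, the shape of the `last_posted` lookup, and the 120-day default are all assumptions, not anything that exists in the API yet.

```python
import random
from datetime import datetime, timedelta


def pick_archive_post(posts, last_posted, now, cooldown_days=120, rng=random):
    """Pick a random post link that was not promoted within the cooldown.

    posts: list of post links from the feed.
    last_posted: dict mapping link -> datetime of its last promotion.
    Returns None when every post is still inside the cooldown window.
    """
    cutoff = now - timedelta(days=cooldown_days)
    eligible = [
        link for link in posts
        if link not in last_posted or last_posted[link] < cutoff
    ]
    return rng.choice(eligible) if eligible else None
```

Returning `None` instead of raising lets the caller decide whether an empty pool means “skip this run” or “relax the cooldown and try again”.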

/api/social-posts/nasa-apod & /api/social-posts/satellite

I like NASA’s public API. Unfortunately, the recent stupidity of the current administration has caused some of it to stop working due to cut staff and other budget cuts. However, two APIs that still work are the Astronomy Picture of the Day (APOD) and Global Imagery Browse Services (GIBS). So I have a couple of fun little tools that make use of those.

The nasa-apod endpoint just retrieves the APOD picture for today and posts it to my socials. It’s always a cool picture. This was a real pain to do in Power Automate because sometimes it’s not a picture, but a video. It was easy to do this in .NET, and trying to fix this PA flow was the bigger motivator in creating this API in the first place.
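The picture-versus-video wrinkle comes down to the `media_type` field in the APOD response. A minimal sketch in Python of choosing something postable from an already-parsed response follows. The function name is hypothetical, and falling back to the video thumbnail is just one possible choice, not necessarily what the API does.

```python
from typing import Optional


def apod_image_url(apod: dict) -> Optional[str]:
    """Return an image URL to post for an APOD entry, or None if there is none."""
    media_type = apod.get("media_type")
    if media_type == "image":
        # Prefer the high-resolution image when the entry includes one.
        return apod.get("hdurl") or apod.get("url")
    if media_type == "video":
        # thumbnail_url is only present when the request asked for thumbs=true,
        # and even then not for every video.
        return apod.get("thumbnail_url")
    return None
```

When this returns `None` for a video, the post can still go out with just the title and a link to the video in `url`.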

The satellite endpoint retrieves a satellite image and posts it to my socials. You can pass in bounding box latitude and longitude and the type of image, and it will retrieve the latest image that covers that area (usually a few hours to a couple of days old) and then post that to my socials.
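For a sense of what a bounding-box request to GIBS can look like, here is a sketch in Python that builds a WMS `GetMap` URL. This assumes the WMS interface is used; the post doesn’t say whether the API uses WMS, WMTS, or something else. The function name, default image size, and example layer are illustrative.

```python
from urllib.parse import urlencode

GIBS_WMS = "https://gibs.earthdata.nasa.gov/wms/epsg4326/best/wms.cgi"


def gibs_image_url(min_lat, min_lon, max_lat, max_lon, layer, date,
                   width=1024, height=1024):
    """Build a GIBS WMS GetMap URL for a lat/lon bounding box on a given date."""
    if not (min_lat < max_lat and min_lon < max_lon):
        raise ValueError("Bounding box minimums must be below the maximums")
    params = {
        "SERVICE": "WMS",
        "REQUEST": "GetMap",
        "VERSION": "1.3.0",
        "LAYERS": layer,
        "STYLES": "",
        "CRS": "EPSG:4326",
        # WMS 1.3.0 with EPSG:4326 orders the box as lat,lon (not lon,lat).
        "BBOX": f"{min_lat},{min_lon},{max_lat},{max_lon}",
        "WIDTH": width,
        "HEIGHT": height,
        "FORMAT": "image/jpeg",
        "TIME": date,  # YYYY-MM-DD
    }
    return f"{GIBS_WMS}?{urlencode(params)}"
```

For example, `gibs_image_url(35.0, -90.0, 40.0, -80.0, "MODIS_Terra_CorrectedReflectance_TrueColor", "2024-06-01")` produces a URL for a true-color image over that box. The latitude-first axis order is the usual thing to trip over.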

/api/word-cloud

I came across this API that generates a word cloud image from a web page. It was pretty cool, and I liked the output. However, the service that hosts the API required a credit card, even for a free account. Nope. Not gonna happen. So I had the AI create my own endpoint. The result was pretty good, and if you look at my blog posts, on the right side, you’ll see I’ve added the results for each post.
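The heart of any word cloud is a word-frequency count that then drives font size. A minimal sketch in Python of that first step is below. The stop-word list, the three-letter minimum, and the function name are assumptions for illustration and say nothing about how the actual endpoint is implemented.

```python
import re
from collections import Counter

# A tiny illustrative stop-word list; a real one would be much longer.
STOP_WORDS = {"the", "and", "for", "that", "this", "with", "was", "are"}


def word_weights(text: str, top_n: int = 50) -> dict:
    """Map the most frequent words to a 0..1 weight relative to the top word."""
    words = re.findall(r"[a-z']+", text.lower())
    counts = Counter(
        w for w in words if len(w) > 2 and w not in STOP_WORDS
    )
    top = counts.most_common(top_n)
    if not top:
        return {}
    max_count = top[0][1]
    return {word: count / max_count for word, count in top}
```

A renderer can then scale each word’s font size by its weight, so the most frequent word is drawn largest.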

/api/linkedin

There are 3 endpoints here related to making LinkedIn’s API work. LinkedIn makes their API a pain, and you can’t just create a permanent token to use for auth, like you can for Bluesky and Mastodon. They require signing in with credentials, which gives you a token that expires. This has always been one of the biggest pain points with using LinkedIn on Power Automate. The credentials expire after a couple months and any related flows break.

So I went round and round with the AI to come up with a solution. I log in once, it gets an access token and a refresh token, and it can use the refresh token to get new access tokens when the old ones expire. Supposedly this means I only have to log in once a year instead of every 2 or 3 months. Not sure, since I just created this API over the last week or so. We’ll see how it goes.

It does store the refresh token in Azure table storage for the moment. At some point I should probably look at upgrading that to Key Vault, but it’s not that big a deal just yet. Future plans.
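The refresh step itself is small. Here is a sketch in Python of the two pieces: deciding when the access token is close enough to expiry to renew, and building the form body for LinkedIn’s token endpoint using the standard OAuth 2.0 `refresh_token` grant. The function names and the seven-day safety margin are assumptions, not the repo’s code.

```python
from datetime import datetime, timedelta

LINKEDIN_TOKEN_URL = "https://www.linkedin.com/oauth/v2/accessToken"


def needs_refresh(expires_at: datetime, now: datetime,
                  margin: timedelta = timedelta(days=7)) -> bool:
    """True when the access token expires within the safety margin."""
    return now >= expires_at - margin


def refresh_request_form(refresh_token: str, client_id: str,
                         client_secret: str) -> dict:
    """Form fields to POST (form-encoded) to LINKEDIN_TOKEN_URL."""
    return {
        "grant_type": "refresh_token",
        "refresh_token": refresh_token,
        "client_id": client_id,
        "client_secret": client_secret,
    }
```

Refreshing a little early means a scheduled post never runs into a token that expired a few minutes before the call.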

Future Plans

I’ve got a couple of other ideas that I want to implement with this initial API setup. For instance, I recently came across DiceBear. It’s a site to generate random avatars to use for gaming accounts or whatever. They have a pretty cool API and I’m thinking about how I might use that to generate random avatars of myself to use for various things.

I’m also working on a couple of side projects and once those start to get out into the world I want a way for people to be able to easily submit support and idea tickets. So I want an endpoint that will automatically generate a GitHub repo issue from these requests. I have no plans to make those repos public, so I need some other way to automate that process.

I’m also considering a couple of other ideas that are rummaging around in my brain. We’ll see what percolates up.

Summary

Creating this was driven by a couple of factors. First, as I mentioned, some things are hard to do in Power Automate, and are just easier to implement in .NET code. I mentioned the difficulty in building a social media post in PA. Other issues around working with the image files pop up from time to time as well. And the other motivating factor was that the LinkedIn connector for Power Automate has been broken for about a month.

Creating this API isn’t something I probably would have invested in if I was writing all the code myself from scratch. But given the recent improvements in AI coding agents and the introduction of SDD tools like Spec Kit, it has become something that doesn’t take up a whole lot of my time. I give Spec Kit some instructions, let it go to work, test the results, and iterate. It’s a few minutes here and there of my time and not hours of slinging code. It does take some effort to review the results and adjust course. But like any code review, it’s usually nowhere near as much time as writing all the code yourself.

I wanted something better, easier, and more stable for automating social media posts. I got it, and it works pretty well for what it is. As I keep finding things that are useful to me, I’ll throw them in as well. Maybe you’ll find it useful too.

Here’s a link to the repo if you’re interested.